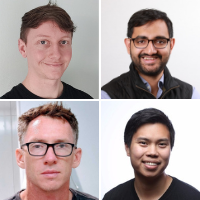

This week on The Data Stack Show, Eric and Kostas chat with Jeff Chao, a staff engineer at Stripe; Pete Goddard, the CEO of Deephaven; Arjun Narayan, co-founder and CEO of Materialize; and Ashley Jeffs, a software engineer at Benthos. Together they discuss batch versus streaming and the transition from traditional data methods, and they define “streaming ETL” as they push for simplicity across the board.

Highlights from this week’s conversation include:

The Data Stack Show is a weekly podcast powered by RudderStack, the CDP for developers. Each week we’ll talk to data engineers, analysts, and data scientists about their experience around building and maintaining data infrastructure, delivering data and data products, and driving better outcomes across their businesses with data.

RudderStack helps businesses make the most out of their customer data while ensuring data privacy and security. To learn more about RudderStack visit rudderstack.com.

To keep up to date with our future episodes, subscribe to our podcast on Apple, Spotify, Google, or the player of your choice.

Get a monthly newsletter from The Data Stack Show team with a TL;DR of the previous month’s shows, a sneak peek at upcoming episodes, and curated links from Eric, John, & show guests. Follow on our Substack below.