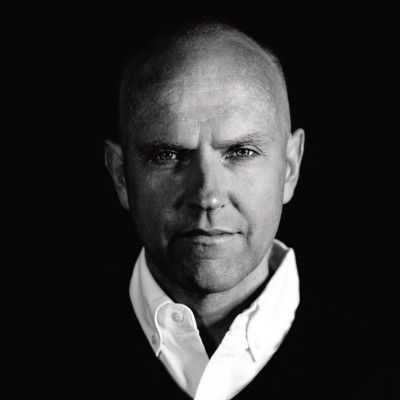

This week on The Data Stack Show, Eric and Kostas chat with Lars Kamp, the Co-founder & CEO at Some Engineering. During the episode, the group talks about the struggles of resource management in organizations today. Topics also include discussion on the trend of large resources to micro resources, managing costs through scale and experimentation, complexities of data infrastructure, how data teams can plan for costs in the future, and more.

Highlights from this week’s conversation include:

The Data Stack Show is a weekly podcast powered by RudderStack, the CDP for developers. Each week we’ll talk to data engineers, analysts, and data scientists about their experience around building and maintaining data infrastructure, delivering data and data products, and driving better outcomes across their businesses with data.

RudderStack helps businesses make the most out of their customer data while ensuring data privacy and security. To learn more about RudderStack visit rudderstack.com.

Eric Dodds 00:03

Welcome to The Data Stack Show. Each week we explore the world of data by talking to the people shaping its future. You’ll learn about new data technology and trends and how data teams and processes are run at top companies. The Data Stack Show is brought to you by RudderStack, the CDP for developers. You can learn more at RudderStack.com. Welcome back to The Data Stack Show. Kostas, another new topic. This has been a great spring and early summer, chatting about things that we haven’t really covered on the show a ton before. Today, we’re talking with Lars Kamp, and we’re going to talk about resource management, which is everyone’s favorite topic in the data stack. But for real, I think this is becoming a really big concern, especially in the macroeconomic climate. Understanding how to run a cost-efficient data stack is becoming more and more critical. And it’s very difficult to do because of the complexity of the systems, right? It’s not just about managing, let’s say, your warehouse compute bill. Especially for teams that run large experimentation platforms and need to spin resources up and down, there’s a huge amount there. So Lars has worked with a team and has created a tool to help you do that, which is really fascinating. I want to start where we always start, which is the nature of the problem. Resource management is not something I have a ton of personal experience with. And it goes deep into the DevOps and SRE world, which is fascinating. So I think it’ll be fun to cover that topic on the show.

Kostas Pardalis 01:46

Yeah, horrible things. I won’t say much. The only thing that I would say is that, although it might sound like the topic today is not directly related to data, we are actually, together with Lars, going to prove that resource management is a data problem. So let’s go and do that.

Eric Dodds 02:06

That is a great teaser. Let’s dive in and talk with Lars. Yeah, good. Lars, welcome to The Data Stack Show.

Lars Kamp 02:16

Eric, Kostas, hey, good to see you both.

Eric Dodds 02:18

For sure. Well, of course, we go back a little ways, but tell us about Resoto and what you’re working on.

Lars Kamp 02:27

Yeah, Resoto. The name stands for resource tool. And our value prop is we cut your cloud costs by 50%. Resoto is a cloud asset inventory for infrastructure engineers, and the magic behind cutting your cloud cost by 50% is that we find and delete expired resources in your cloud, aka zombie resources or drift, along with all the associated resources. That is one use case. In software, you may have heard about garbage collection, and we do the same for your cloud infrastructure. And when I say cloud infrastructure, I think about AWS and GCP, but also Kubernetes.

Eric Dodds 03:08

For sure. Okay, so let’s break this problem down. When you think about waste in cloud infrastructure, you’re talking about an extremely broad footprint of potential problems. What are the things that drove you to actually invest in and work on Resoto as a product? Were there particular problems that you saw in terms of resource management where you said, this is a major problem?

Lars Kamp 03:44

Yeah. What we saw is a trend to what I would call peak ops. You have all these different DevOps spin-offs, DevSecOps tools, and they all use the same approach. There’s usually an agent that you install in your infrastructure to get data out of your infrastructure, and then you take some sort of remediation action. They’re all operating in real time,

Eric Dodds 04:14

Like alerting against thresholds, etc.

Lars Kamp 04:17

That’s right. That’s right. But if you look at what has happened to cloud infrastructure in the past decade, five years, two years, there are a number of trends. Number one, we see a smaller size of function, right? You’ve gone from a beefy compute instance to a very tiny Lambda function that may have a lifespan of minutes in some cases. And as the size of function goes down, the volume of resources has gone up at the same time. So, let’s just say you may still be spending, I’m making this up, a million a month, but you’re not spending it on 10,000 resources. Now you’re spending it on a million resources that cost you $1 a month, roughly speaking.

Eric Dodds 05:04

Distributing across a larger footprint. Yeah.

Lars Kamp 05:07

So that’s one change, right? That’s driven by the product roadmaps of the cloud providers. The second change is that, to deal with this large number of resources, you can’t do that in a console anymore, you need to do that in code. So the second change is infrastructure as code. You have tools like Terraform, Pulumi, or CloudFormation that you use to deploy and manage these resources. And so the life cycle of these resources really shortens, and they get updated a lot. So now you’re dealing with an inventory that’s not only larger, but it also changes all the time. That’s the second one. And I would say the third one is that, when people hear cloud, they usually think of production environments, like my app that’s running somewhere. But there’s also this world of test and staging environments that has grown much, much faster, because developers want to have the liberty to experiment, like, I’m going to spin up this experiment. And so it’s these three trends that contribute to this trend of growing cloud infrastructure and the intractable complexity that comes with it.

Eric Dodds 06:21

Yeah, absolutely. One question: when you think about the migration from large resources to micro resources, right, so you were spending, you know, a million dollars on 10 things, and now you’re spending it on thousands of things. What’s driving that? What’s the architecture? I mean, you said the product roadmaps, but can we dig into that just a little bit more?

Lars Kamp 06:52

Yeah, if you think about the portfolios of the big cloud providers, I think the number of products that AWS offers, last time I checked, was like 382 different products. That doesn’t include all the different SKUs, the stock keeping units, below that, and then maybe the different flavors. And so if you start counting the number of APIs that are associated with those products, it goes into the thousands and tens of thousands. And that the cloud providers have developed in response to market needs. I think one of the trends is obviously the trend to microservices. I know, as we record this podcast, there was this little snafu at Amazon where they said, oh, Amazon Prime Video, we migrated back to a monolithic application. But really, it’s customer demand. And one big shift was the trend to microservices and smaller components of an application.

Eric Dodds 07:56

And on the testing and dev side, just curious, how much of that do you think is driven by the rise of large-scale ML practices inside of companies? The extreme end of that is, you know, self-driving car companies that need to run really significant tests and validations before they roll things out to production. You’re talking about things that most companies would only dream of in terms of production-scale models running, and they’re doing it as sort of a test. I know that’s the sharp end, but is that a driver?

Lars Kamp 08:39

Yeah. And I think actually, that’s where the world of data and the world of infrastructure kind of converge. Let’s speak broadly about data products, and I would include machine learning and AI in that. It all needs to run somewhere, right? So you need infrastructure. And usually that’s probably Kubernetes, because you have elastic workloads and you need orchestration. And so yes, depending on the industry and how mature they are, I would argue that a lot of those workloads are being driven by the data world.

Eric Dodds 09:15

Yep. Yeah, that makes total sense. Okay. So Resoto helps you eliminate waste. Where in the landscape, sorry, what are the causes of maybe the most acute resource drains? Where do those come from? And I guess, to direct the question a little bit, are those clustering around use cases? Like, you know, we need to spin up a cluster of 32 nodes or whatever it is in order to do this thing, and then we forget to spin it down. Is that being driven by a particular type of use case, or is it sort of agnostic and just a general problem?

Lars Kamp 10:05

I think it’s the latter. It’s more of a general problem. And you nailed it, right? There’s a developer who says, okay, we’re going to test this, we’re going to spin up a workload. But there are also machines that spin up workloads: your CI/CD pipelines, auto scaling, and all of that. And then, as you said, in theory these tools should all clean up after themselves automatically. Reality is, that doesn’t always happen. And then also, developers might forget about it. They’re humans, too, right? They’re under pressure, they need to deliver. And so do I spend my Friday on planning my next experiment for next week, to help the company ship products? Or do I spend my time sifting through my consoles, finding whatever needs to be cleaned up? I think the answer is very clear.

Eric Dodds 10:57

Yeah. How is this? I mean, what we’re talking about here really is technical debt. Right? How is this? Okay. Yes, I’m interested in this.

Lars Kamp 11:11

Yeah, I guess that’s one way of looking at it. But I think there’s always going to be technical debt in anything, be it software or data. And the way we like to look at it is that, at the end of the day, this comes down to control, right? How do I control this giant pile of resources in my infrastructure? On the one hand, you want to give developers the freedom to experiment, to spin up resources. But on the other hand, as an infrastructure engineer in charge of this, I want to stay in control. You can give lots of freedom, but then you’re not in control anymore. And if you try to impose too much control, then nothing gets done. And so what we’re saying is, why can’t I have both? And then, as we start looking at the problem, well, how can I do that? If I have this giant pile of resources, then you quickly come to an answer that includes data. And that means collecting data about the state of your infrastructure, not in real time, not in high granularity, but something like a snapshot every hour. And as I collect that data about my infrastructure, I get a good picture of what’s going on in my infrastructure. So this whole concept of exploration is something that I think we know from the analytics engineering world and everything that has happened within the modern data stack in the past five years. And I think, as we’ve seen these changes in infrastructure, we can apply some of these lessons learned to infrastructure data. So that’s kind of how I like to look at the world.

Eric Dodds 13:09

Let me push on that a little bit more. Because Lars, I know you’re a man of conviction. I agree with you that in the world of infinite compute in the warehouse and analytics engineering, you’re able to explore with, you know, unbounded vigor, if you can say that. And there’s a low likelihood that your SQL queries are going to cause someone to tap you on the shoulder and say, this is causing a problem, right? There’s probably a lot more happening elsewhere. Do you envision the same thing on the infrastructure side, where really we should operate as if these are infinite resources, and we have tools that help us manage the cost control on the back end as we explore what is possible with scale and experimentation? Or do you have a more measured approach where you need to consider the constraints going in?

Lars Kamp 14:21

I think it’s the former. You don’t want to put boundaries on experiments. I mean, you kind of have to, right, but

Eric Dodds 14:31

Sure, there are physical limitations. Yeah. But spinning up and spinning down clusters, and worrying about the cost afterwards. I mean, in my mind, and I’m obviously showing my cards here, we should spin up and spin down, do a spike, do a huge experiment, and worry about the cost afterwards. To me, that’s a huge accelerant to a company, if you can control it.

Lars Kamp 15:02

Well, let’s go back to the problem: a giant pile of resources, and lots of experiments that are driving that. We don’t want to go back and say, okay, now you cannot do experiments anymore, right? But we want to be in control. We want to know when that happens. And I think if I can find a way to give my development team the liberty to use all the tools at their disposal out there, with all the different cloud products, then that’s to the benefit of the company. But now it’s like, what do I do to stay in control? I think the existing approaches include more ops tools: okay, let’s monitor this, let’s instrument that, let’s deploy an agent there. And our conviction here with Resoto is, look, there’s a time and place for tools that give you real-time data with high granularity, and that’s probably for your production applications. But for everything else, you probably don’t need real time, and you probably do not need per-second granularity. A snapshot is enough. This is The Data Stack Show, and I think it’s a concept that will resonate with your listeners. We have this in analytics, right? I run batch jobs from all my sales and marketing systems, I unify all this data in my Snowflake or Redshift cluster, and then I analyze it. And then I apply the insights from my systems, either in a dashboard, or maybe I use something like reverse ETL, where I make it actionable. So that chain, you know, ETL my data, put it into a single inventory, analyze it, create metrics, react to those metrics, put it back into production as action, that’s something I think we can apply to the infrastructure world, right?
And so the basic concept here is to say, okay, dear developer, you have your account, you have your infrastructure resources, go crazy, go at it. All we need to know is what exactly is happening with your infrastructure. And then what we do is we collect data from your infrastructure. We can go into detail on how exactly we do that, but at the end of the day, it’s almost like data integration for infrastructure engineers. We go into your infrastructure and we call the cloud APIs, the same APIs that Terraform or Pulumi use to deploy resources. We call the same APIs for data extraction: tell me about these resources that are running, tell me about their configuration, tell me about their state. And we put that all into a single repository.
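Editor’s note: the chain Lars describes (collect a snapshot, put it in one inventory, create metrics, react to them) can be illustrated with a minimal Python sketch. The snapshot records, field names, and the `accounts_over_limit` helper are hypothetical and are not Resoto’s data model or API; they only show the shape of the idea.

```python
from collections import Counter

# Hypothetical snapshot: one record per resource found during a collection run.
SNAPSHOT = [
    {"account": "dev-1", "kind": "instance", "id": "i-1"},
    {"account": "dev-1", "kind": "volume", "id": "vol-1"},
    {"account": "dev-1", "kind": "volume", "id": "vol-2"},
    {"account": "prod", "kind": "instance", "id": "i-9"},
]


def resources_per_account(snapshot):
    """Metric: number of resources seen in each account."""
    return Counter(record["account"] for record in snapshot)


def accounts_over_limit(snapshot, limit):
    """Reaction: list the accounts whose resource count exceeds the limit."""
    counts = resources_per_account(snapshot)
    return sorted(account for account, n in counts.items() if n > limit)


if __name__ == "__main__":
    print(resources_per_account(SNAPSHOT))
    print(accounts_over_limit(SNAPSHOT, limit=2))
```

Because the metric is computed from a batch snapshot rather than a live stream, it can be recomputed on whatever schedule the collection runs on.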

Eric Dodds 17:51

Yep. That’s fascinating. There really seems to be a pretty clear parallel to analytics on the modern data stack. Let’s step back just a little bit. How are SREs or infrastructure engineers doing this today? I mean, it’s obviously a problem, or you wouldn’t be trying to build a solution for it. And it’s very compelling to hear about. I think about it almost like an executive dashboard, but for resource management, where you say, this trend is concerning, and we need to go back and understand the lineage and the cause of this. And so we’re just going to trace it back and fix the problem. Ironically, similar to BI. But you’re building a solution, so how are people solving this today? One thing you mentioned was a lot of monitoring tools, which obviously is not helping. Maybe that makes it more complex.

Lars Kamp 18:53

Yeah. And we can go through the options, and maybe talk a little bit about the pros and cons of these different options. So number one is, as you said, these, I call them XOps tools, right? Different operational tools. I think it’s in the name: it’s not an analytics tool, it’s an operational tool that collects data in various ways from the infrastructure for a very specific and opinionated use case. That’s one. Then there’s definitely the world of scripts, where infrastructure engineers have built their own little scripts, or use some sort of governance tool. Cloud Custodian is a good example. So yes, the world of scripts, basically YAML. The third one is the world of consoles. But that is also very constrained. Because if you think about it, even at a startup, you operate in different regions, each developer gets maybe more than one account, and the number of combinations goes into the thousands and tens of thousands. And so that stops working. Those are the three things that we see today. And then the fourth one is native cloud provider products. Google has a product called Cloud Asset Inventory. They do something similar to what Resoto does and, lo and behold, extract the data into a BigQuery instance. AWS has a product called AWS Config that extracts configuration data from your resources and stores it in an S3 bucket. From there, you can query it with Athena, and you can visualize it in a QuickSight dashboard. So I think the parallels to the modern data stack are pretty obvious.

Eric Dodds 20:43

Yeah. Okay, two more questions. One is just my curiosity. And the other one, I think, will be a great handoff to Kostas. The first one is, how big is this problem? I mean, cloud costs are a very hot topic. There’s obviously a macroeconomic influence to this, where data leaders and infrastructure leaders are trying to control costs. But regardless of the macro environment, we sort of have this weird world of infinite scalability, but it can bite us. So how big is the problem?

Lars Kamp 21:27

I think everyone has this. No matter if you’re small or big, it’s just, how urgent of a problem is that for you? And I think you nailed it. Macroeconomic changes, that’s definitely one driving factor. I think it also depends on the industry. If you’re a SaaS application, then cloud cost is probably a first-order business problem, right? Gross margin. And so I think everyone is affected by it. If we talk to our open source users and customers today, I think the common theme is always, gosh, I wish we would have done this two or three years ago, this being putting something into place that prevents sprawl in the first place and doesn’t just react to it. That’s also one of our underlying principles. Today’s approach to anything security or cloud costs is like, okay, we wait for it to happen, we drive the car off the cliff, I’m exaggerating, and then we’ve got to take action. It’s like, oh, we can worry about this later, we’re growing. What we’re saying is, well, you can actually have both, right? Just prevent it. Don’t even wait for the sprawl to happen.

Eric Dodds 22:57

Yeah, well, okay. So I have a sort of a one b question here. This world of sort of infinitely scalable resources, I mean, people who have sort of, you know, recent experience here, or, you know, sort of young in the industry, you know, maybe that’s very common to them. You know, I think people who’ve been working in infrastructure for a long time are very sensitive to cost control, because that was a very big problem, you know, not too many years ago. But how many? You know, one thing that’s really interesting, when you think about this sprawl, this problem across a very complex infrastructure, I mean, I think the example you gave about sort of moving to lambdas, even, you know, the sprawl is unbelievable, and has actually happened very quickly. There are probably really smart infrastructure engineers who don’t necessarily have the, you know, sort of, you know, innate sense of how to manage that sprawl or even the experience to know when slippage is happening. Do you see that as a big problem? I mean, on some level, we’re talking about drastically increasing complexity. And you have really smart people where maybe they are, it’s very difficult for them to detect not because they’re not smart, but because there’s slippage happening across a million different vectors on a small scale that add up to a pretty big problem.

Lars Kamp 24:30

Yes, you nailed it. And it’s not just costs. It’s security as well. And I think at some point, we may want to talk about this common data layer, this common infrastructure data layer, and what problems that layer can solve for us. But what you said is exactly right. And I think the actual problem there is executive awareness. Every time we go through a platform shift, you know, 15 years ago or whatever, 10 years ago, you’re a CEO of a company, and all of a sudden you needed to understand what mobile ads are, right? Oh, it’s a new distribution channel. You had to really dig in and start to understand it. The CEOs who chose not to understand that, their companies went out of business. And I think we see the same going on right now with ChatGPT, GPT-4, and all these things. As an executive, you need to familiarize yourself with these things to know how they impact your business. And I think it’s the same for infrastructure. What I observe, and this is also a little bit of just my personal opinion, is that, number one, tech execs are not always very aware of what’s going on with their cloud infrastructure. I think that needs to change. In general, they look at developers as a productivity asset: they’re building code, we’re shipping products. Whereas an infrastructure engineer like an SRE is looked at more as a cost center. So we’re trying to hire lots of developers, but we’re going to try to limit the number of SREs. And there’s not a single SRE or infrastructure engineer that I know who’s not stressed out. They’re the ones who are holding the ship together. And something’s gotta give. And usually that something is a higher bill, security, and all of that.

Eric Dodds 26:25

Okay, so question number two. And this is where I’m going, I’m going to need Kostas to jump in here. But

Kostas Pardalis 26:31

This is your third question.

Eric Dodds 26:34

No, I said 1-A and 1-B. Oh, god.

Kostas Pardalis 26:37

Wow. Okay. All yours?

Eric Dodds 26:42

How does Resoto actually solve this problem? There you go, Kostas. Here’s your second question.

Lars Kamp 26:52

Okay. Great. Yeah, I don’t want to turn this into a commercial for Resoto. I’m actually way more excited to talk about solving this problem with data. And I think that’s the fun part of the show that we can talk about now. We identified the problem: lots of sprawl, we don’t know what’s going on in our infrastructure, and it causes all sorts of problems, cost, security. How do we get back in control? Point number one is, well, I need to have data about the state of my resources. How do I get this data? And when you look into acquiring that data, like with anything, the data acquisition part is the hard part. What we have done is, we have built a set of collectors that call cloud APIs and collect metadata from these cloud APIs. What’s metadata? Let’s take something like an EC2 instance. What is the start date? What time did we first see it in the infrastructure? How many cores does it have? What is the attached storage volume? What VPC does it run in? So it’s information about the state and the configuration of the resource, but also the relationships of that resource to the other assets in the cloud. And that’s really an ETL process. These collectors run on a schedule. By default, with us, it’s one hour, but you can set it to whatever fits your needs: 30 minutes, 20 minutes, 15 minutes. There’s a worker that runs, and we extract this data, and we put it into a single place. In our case, that’s a graph database, not a cloud warehouse. But there are also ways that you can export it to a Snowflake cluster or S3. We have a product called Cloud2SQL, which, as the name suggests, transforms the data into tables and rows. But for the core product Resoto, we chose a graph database.
We could talk a little bit about why we did that. But the listeners of this show come more from the modern data stack, and I think the part that will resonate is, look, there are connectors that talk to cloud APIs, extract data, test the data, transform it into a unified format, and put it into a single place.
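Editor’s note: to make the metadata-plus-relationships idea concrete, here is a minimal Python sketch that normalizes collected records into nodes and edges and then walks the relationships. The payloads, field names, and the `build_graph` and `descendants` helpers are hypothetical stand-ins; they are not Resoto’s schema, and no real cloud API is called.

```python
from collections import defaultdict

# Hypothetical, simplified records standing in for what a collector might extract.
RAW = [
    {"kind": "vpc", "id": "vpc-1"},
    {"kind": "instance", "id": "i-1", "cores": 4,
     "launched": "2023-05-15T09:00:00Z", "vpc": "vpc-1",
     "volumes": ["vol-1", "vol-2"]},
    {"kind": "volume", "id": "vol-1", "size_gb": 100},
    {"kind": "volume", "id": "vol-2", "size_gb": 500},
]

RELATION_KEYS = ("vpc", "volumes")


def build_graph(raw):
    """Split each record into node properties and parent -> child edges."""
    nodes = {}
    edges = defaultdict(set)
    for item in raw:
        nodes[item["id"]] = {k: v for k, v in item.items() if k not in RELATION_KEYS}
        if "vpc" in item:
            edges[item["vpc"]].add(item["id"])  # the VPC contains the instance
        for volume_id in item.get("volumes", []):
            edges[item["id"]].add(volume_id)  # the instance has attached volumes
    return nodes, edges


def descendants(edges, start):
    """Return every resource reachable from start by following edges."""
    seen, stack = set(), [start]
    while stack:
        for child in edges.get(stack.pop(), ()):
            if child not in seen:
                seen.add(child)
                stack.append(child)
    return seen


if __name__ == "__main__":
    nodes, edges = build_graph(RAW)
    print(nodes["i-1"])
    print(descendants(edges, "vpc-1"))
```

A traversal like `descendants` is the kind of question a graph store makes cheap, for example finding everything associated with a resource before cleaning it up.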

Kostas Pardalis 29:24

Lars, now I think I can ask my questions. Right, Eric, am I allowed? Yes. So okay, before we get deeper into the Resoto technical details, I want to ask you something related to the conversation you had with Eric earlier. You mentioned the complexity around cloud infrastructure today, right? And I think pretty much every person who has worked in this industry for the past 20 years knows that what we’re trying to do is add more and more abstraction layers to simplify the way that we interact with infrastructure, right? From bare metal, today we have serverless with Lambda functions and all that stuff. So there are two questions here, actually. The first one is, to me it feels like there’s a kind of paradox here. On one hand, we are trying to reduce, let’s say, the surface area of how we interact with infrastructure through all these abstractions. But at the same time, it becomes harder and harder to actually understand how the infrastructure we are using is operating and is part of our product, right? And that’s what I like to call this gap. So why do you think this is happening?

Lars Kamp 30:50

Well, you mentioned that some of it is driven by these different levels of abstraction. So this evolution from bare metal to today, where we have Kubernetes, right? We’re running these different pods, and they run on some sort of machine that we don’t even see or know of. Even just the question, okay, which machine is this pod running on? That’s not straightforward to answer, especially if you have thousands of them. So I think that’s one driver, these different levels of abstraction. The second one is that, ironically, there’s this thing called the Well-Architected Framework that AWS has, and that suggests separating your workloads into different cloud accounts and different regions. And that happens for control reasons, for security reasons, for failover reasons, all of that stuff. And so what, on one end, is a really good principle to apply to have a secure infrastructure, a resilient infrastructure, a scalable infrastructure, just adds to the amount of fragmentation, and therefore loss of control over the resources running in your infrastructure.

Kostas Pardalis 32:05

It makes a lot of sense. And okay, from the SRE point of view, let’s say we have a person who is at least aware of all these different resources that are participating in this infrastructure, right? But they are not the only people who are interacting with that. The rest of the engineers are actually interacting, even indirectly, with all these things. So from the SRE perspective, let’s say we are using a tool like Resoto, and we have a much deeper understanding of what is happening there, like the behaviors that our engineers have with all these resources. How easy or difficult is it to communicate these things back to our product engineers? Because, as you said, there are not enough SREs, right? They have many things to do, and the complexity of the problem they are dealing with is exploding. How do we communicate to the rest of the engineers about things that tools like Resoto are helping to solve?

Lars Kamp 33:09

I obviously love talking about Resoto. But let’s abstract the problem, the solution, and how we solve it. I think what you want to do is make engineering efficiency a KPI for your product engineers. And one of the problems with that is that they have zero visibility into the cost of a resource, the lifetime of a resource. There are other tools out there that solve the deployment problem, like, if I deploy this, how much does it actually cost me? But just making efficiency part of a good engineering habit, I think that’s the first change we need to make. Some have it, some don’t. And then we can debate about how exactly we do that. For instance, I’ll give you an example of what one of our customers has done, a company called D2iQ. They are a managed Kubernetes provider. They introduced a very simple process by introducing tags. Tags are basically key-value pairs attached to your resource. And they chose two tags: the name of the engineer who deployed the resource, and an expiration date for the resource. And they have certain rules in certain accounts, like, look, if you’re in these accounts, no resource should live longer than two days, or no longer than Friday night, 7pm, of the week it was deployed. The name is absolutely required, because if the resource is running, and I’m an SRE and I have developers sitting across the globe, I want to know who deployed it. I want to have a quick way to talk to that person and say, hey, what are you doing with this? That’s number one. Number two, the expiration tag is just the way to say, look, this is the last day of the resource. And once you go beyond that date, we’re going to clean this resource up, in test, not in production, right?
And so what are the users out of four? Well, number one, once the resources are deployed, we check, they use the result or to check if every resource adheres to those two principles to those two texts. And then two things happen. If the tax or miss is correct, if they’re incorrect, they use the result to correct the tax, there’s a little bit of logic, you know, people make typing errors and all sorts of mistakes, right? And so we correct those resources. For instance, if the expiration date is longer than the longer the policy, we automatically shorten the lifetime of the resource to the max allowed. Now, if none, if neither of those two tags have been applied, due to IQ, just delete the resource and test. And I know that may seem draconian to some people, but we’ll talk about that a little bit. They had huge efficiency and developer productivity improvements, you know, and at first, they said, like, oh, gosh, you know, this isn’t that I can’t work like that. But it turns out, you can write, and just those two simple tags, an owner, write the name and owner, and the expiration date, and then deleting the resource once it has reached its expiration date, has done wonders, right. I think if there’s a specific case, I think they save 78% of their infrastructure spend, which is unheard of, right. So it doesn’t need to be complex, right? It’s like if you change the philosophy, and you make it a KPI of the engineers, and then you give them the tools to reach that KPI. A wonder it will happen, you know, it will happen. Yep. 100%. Yeah, that’s awesome. And let’s go back to like Resoto now and the technical side of things. But
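
[Editor’s sketch] The two-tag policy Lars describes can be expressed in a few lines. This is only an illustration, not D2iQ’s or Resoto’s actual implementation: the `Resource` class, the tag keys `owner` and `expiration`, the two-day `MAX_LIFETIME`, and the returned action names are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Assumed policy: no resource in a test account lives longer than two days.
MAX_LIFETIME = timedelta(days=2)


@dataclass
class Resource:
    """Hypothetical stand-in for a cloud resource with tags."""
    id: str
    created: datetime
    tags: dict = field(default_factory=dict)


def enforce_tag_policy(resource: Resource, now: datetime) -> str:
    """Return the action a cleanup job would take: 'delete', 'corrected' or 'ok'."""
    owner = resource.tags.get("owner")
    raw_expiration = resource.tags.get("expiration")

    # Neither tag applied: the resource is deleted (in test accounts only).
    if owner is None and raw_expiration is None:
        return "delete"

    # Parse the expiration tag; typos or missing values are treated as invalid.
    try:
        expiration = datetime.fromisoformat(raw_expiration)
        if expiration.tzinfo is None:
            expiration = expiration.replace(tzinfo=timezone.utc)
    except (TypeError, ValueError):
        expiration = None

    # Invalid or too-long lifetimes are shortened to the maximum allowed.
    latest_allowed = resource.created + MAX_LIFETIME
    corrected = False
    if expiration is None or expiration > latest_allowed:
        expiration = latest_allowed
        resource.tags["expiration"] = latest_allowed.isoformat()
        corrected = True

    # Past its expiration date: clean it up.
    if now >= expiration:
        return "delete"
    return "corrected" if corrected else "ok"
```

The conversation does not say what happens when only the owner tag is missing, so the sketch leaves that case alone rather than guessing.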

Kostas Pardalis 37:07

It sounds like we are dealing with a data problem here. So when you’re talking about extracting data from the cloud provider, right, you probably know this much better than me, but AWS has hundreds of products, right? Yes. Each one of these products is, let’s say, a separate data source for you. What are the differences in terms of how the data looks, and how do they differ from each other? Because these are products we are talking about, right? We have things like CloudFormation, which is one product, and EC2, which is another, very different kind of resource, right? How do these things work? What does the data look like, and how rich does the data model have to be in order to represent all these products? Yeah,

Lars Kamp 38:05

Yeah. It’s a zoo, right? So let me clue the audience in a little bit on what’s a product, what’s a cloud product, right? So I think AWS’s overall portfolio is something like, I think, 382 different products, right? And then let’s take one product as an example, compute instances, right? If you double-click on EC2, Elastic Compute Cloud, then EC2 itself has, I think, like 200 different compute instances, right? So it adds up. And then each product has its own set of APIs. As I said, those APIs are usually optimized for deploying resources, less so for extracting data. And it also depends on the cloud provider. GCP came later to the market, and they’ve done a lot of things right. When it comes to their APIs, they’re a lot more consistent. But for AWS, on the other side, things can be pretty inconsistent. And what do I mean by that? Let me give you a very specific example. If I just want to know the age of a resource, like if I talk about the lifecycle of my resources, I want to know, on average, how old are my resources? Right? Why do I want to know that? Because, well, you know, if my resources tend to be old, in terms of months, and they don’t tend to be updated frequently, then it’s probably an indicator that we’re not doing a lot of development, right? On the other hand, if they have a short life cycle and they get updated frequently, that’s a great indicator of lots of development activity. So how do I get the age of a resource? Well, I need some sort of timestamp, right? Well, AWS does provide that timestamp. But let me give you two very specific examples for timestamps. So EC2, the compute instance, has a datetime timestamp, right? And it’s, I think, what’s the ISO norm called, 8601? Right? So it’s a string, you know, it’s year, month, day, hour, minute, second, like that. That’s a timestamp.
So I can easily calculate the delta to today’s date, or the time right now. Then there’s a second product, I’m just picking two random examples, the SQS queue, right? They have a property called CreatedTimestamp. And that value is the number of seconds that have elapsed since the Unix epoch, which is midnight UTC on January 1, 1970. Right? And so you have these two different products with two different APIs that kind of tell you the same thing, which is, you know, when was this resource created, but in completely different formats. And that happens across hundreds of resources and thousands of APIs. So extracting data from these cloud providers is not really straightforward. You need to put a lot of work into building the connectors and understanding the data you get, and then extract it in a way that’s consumable for the user. It was a little bit of a long explanation, but I hope that explains the problem in a little more detail. Why is this hard?
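
[Editor’s sketch] The timestamp mismatch Lars describes, an ISO 8601 string from one API and epoch seconds from another, is the kind of thing a connector has to normalize before an age can be computed. The following is a minimal illustration; the function names `parse_created` and `age_in_days` are made up for this example and are not part of Resoto or any AWS SDK.

```python
from datetime import datetime, timezone


def parse_created(value) -> datetime:
    """Normalize a creation timestamp to a timezone-aware UTC datetime.

    Accepts either epoch seconds (as a number or a numeric string)
    or an ISO 8601 string such as '2023-06-01T00:00:00Z'.
    """
    if isinstance(value, (int, float)):
        return datetime.fromtimestamp(value, tz=timezone.utc)
    text = str(value).strip()
    if text.isdigit():
        return datetime.fromtimestamp(int(text), tz=timezone.utc)
    # Older Python versions reject a trailing 'Z', so rewrite it as an offset.
    parsed = datetime.fromisoformat(text.replace("Z", "+00:00"))
    if parsed.tzinfo is None:
        # Assumption for this sketch: naive timestamps are treated as UTC.
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed.astimezone(timezone.utc)


def age_in_days(value, now: datetime) -> float:
    """Age of a resource in days, regardless of the source format."""
    return (now - parse_created(value)).total_seconds() / 86400
```

With both formats mapped to the same type, the same `age_in_days` call works for an instance and for a queue.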

Kostas Pardalis 41:11

Oh, absolutely. Absolutely. I think it’s very interesting. I think people who never had a reason to use these APIs probably think of, let’s say, AWS as one big API, right? But that’s actually far from reality. There are actually hundreds of different APIs and hundreds of different data models, and all of these need to be aligned if you’re going to process them in a consistent way. And that’s the ETL part of the data problem, right? And when we are talking about data for resources, I would assume that we are talking about, as you mentioned, the creation date, for example. There’s a set of metadata fields that describe these resources. What other information are we talking about here? There are names, dates, and probably custom key-values that people use as annotations for whatever reason, like, as you mentioned, to apply processes there. Tell us a little bit more about what this data looks like, even for an EC2 instance, right? Let’s say the default row that describes an EC2 instance, what does it look like? Oh,

Lars Kamp 42:37

I mean, you’re going down a rabbit hole with this one, right? So the number of fields and properties can go into the dozens for a specific instance. Right? And, you know, we have a whole section on data models on our website, but, you know, a create timestamp, last updated, name of the resource, tags. And then, depending on what the resource is, also relationships, right? Like, oh, you know, what is attached to this EC2 instance, right? In some cases it’s dozens of fields, in some cases it’s, you know, eight or nine, but it’s a lot, right? And then I think the underappreciated skill there is that if you want to work with this data, and that’s the value that some of these xOps tools that I’ve mentioned provide, you have to understand the data model for each individual cloud provider and resource, right? And so, all of a sudden, you have to become an expert in, you know, 382 products, plus the different properties of each API of each resource, right? And so now, all of a sudden, if we take 10 as a number of properties per product, you’re looking at, like, 3,820 properties that you need to understand. I think that’s where you quickly run into limits, where even, you know, an infrastructure engineer just can’t do that.

Kostas Pardalis 44:06

Yeah. And you mentioned relationships. And I know that’s part of the abstraction, right? You have storage that attaches to EC2 instances, these EC2 instances might be part of a cluster that you have, blah, blah, blah. All these things have some kind of hierarchy or connection, right, a relation. Yeah. I don’t know how explicit or implicit this is, but if I understand correctly, part of what Resoto is doing is allowing you to exploit these relationships and learn about your infrastructure as a whole. So tell us a little bit more about that, both, let’s say, how the world looks and how Resoto models the world.

Lars Kamp 45:06

Yeah, so, good point, the relationships. Let’s talk about the problem there. As you said, right, these cloud assets or cloud resources are really nested. A storage volume is attached to an EC2 instance, the EC2 instance runs in a VPC, the VPC runs in a region, and the region belongs to a cloud. It’s all nested, right? Maybe there’s an IP address, obviously, that belongs to the EC2 instance. And finally, there are things like IAM roles or access policies, which can be nested too. So the complexity gets high quickly. And understanding these relationships is beneficial for a number of reasons. Number one, if you’re just asking a question, like, you know, how many resources do I have? Or what is everything behind this IP address? Right? That’s what these relationships tell me. But also, if I want to clean up my resources, right? I’ll just keep going back to the EC2 instance, because I would assume everyone’s familiar with that product. You know, I can look at this lowly EC2 instance and think, I mean, it looks unused, I can probably delete it, right? But what you don’t know when you do that is something that’s called the blast radius. Like, if I delete that, what else will go down? Right? And you just don’t know. You don’t know without the relationships. And that’s why there’s so much value in capturing the dependencies and the relationships of these assets.
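
[Editor’s sketch] The “blast radius” question, what else goes down if I delete this, is a graph traversal once the relationships are captured. The example below is a generic breadth-first search over a made-up dependency graph; the resource identifiers and the `DEPENDENTS` structure are hypothetical and do not reflect Resoto’s data model.

```python
from collections import deque

# Hypothetical graph: each key maps to the resources that depend on it.
DEPENDENTS = {
    "region/us-east-1": ["vpc/main"],
    "vpc/main": ["instance/web-1", "instance/web-2"],
    "instance/web-1": ["volume/data-1", "ip/203.0.113.10"],
    "instance/web-2": [],
}


def blast_radius(graph: dict, start: str) -> list:
    """Return every resource reachable from `start`, in breadth-first order.

    These are the resources that would be affected if `start` were deleted.
    """
    seen = {start}
    affected = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for dependent in graph.get(node, []):
            if dependent not in seen:
                seen.add(dependent)
                affected.append(dependent)
                queue.append(dependent)
    return affected


if __name__ == "__main__":
    # Deleting the VPC takes both instances and everything attached to them.
    print(blast_radius(DEPENDENTS, "vpc/main"))
```

Without the edges in the graph, the instance that “looks unused” and the volume or IP address attached to it are just unrelated rows.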

Kostas Pardalis 46:57

And how do you do that with Resoto?

Lars Kamp 47:00

Yeah, so we have a data model where we do all the upfront heavy lifting, meaning when we support a resource, we look at the API, we look at the resource and all the different properties, and we map that to our data model. And then we also map the dependencies. It’s a little bit of a visual thing, I think it’s best to check out what this looks like in reality in our docs, but it really is a graph, right? And we map that upfront. And it’s a statically typed model, right? And so, you know, we know, for instance, that a compute instance has a certain number of cores, well, that’s an integer, right? And so we put a lot of time into understanding the data models, we map those relationships, statically typed, and we collect the data. And because it’s statically typed, it also allows us to index the data really quickly. And so search becomes really fast, versus, you know, running some batch jobs with SQL queries.
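
[Editor’s sketch] To make the “number of cores is an integer” point concrete, here is a small typed record with a lookup index built on one of its properties. Python only checks these types at runtime, so this approximates the idea rather than reproducing Resoto’s model; the `ComputeInstance` class, its fields, and `build_index` are invented for the illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ComputeInstance:
    """Hypothetical typed record for a compute instance."""
    id: str
    name: str
    cores: int

    def __post_init__(self):
        # Reject values whose type does not match the declared field type.
        for f in fields(self):
            value = getattr(self, f.name)
            if not isinstance(value, f.type):
                raise TypeError(
                    f"{f.name} must be {f.type.__name__}, got {type(value).__name__}"
                )


def build_index(instances, prop: str) -> dict:
    """Map each value of `prop` to the ids of the instances that have it."""
    index = defaultdict(list)
    for instance in instances:
        index[getattr(instance, prop)].append(instance.id)
    return index


if __name__ == "__main__":
    fleet = [
        ComputeInstance("i-1", "web", 4),
        ComputeInstance("i-2", "db", 8),
        ComputeInstance("i-3", "worker", 4),
    ]
    by_cores = build_index(fleet, "cores")
    print(by_cores[4])  # ids of all 4-core instances, found without a scan
```

Because every record is known to carry an integer `cores` value, the index can be built once and queried directly instead of scanning and casting on every search.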

Kostas Pardalis 48:11

And how do you query this data using Resoto? Because we are talking about a graph data model here, I would assume you’re probably using a graph database, or? Yes,

Lars Kamp 48:24

That’s correct. Yeah, so we’re open source, right? And the graph database we use is called ArangoDB. There are other products out there. I think most people, when they hear graph databases, will think Neo4j, right? They kind of pioneered the model. And, you know, graph databases are pretty powerful for very specific use cases. But, you know, a graph query language is pretty hard. Unless you do it every day, I’m not sure it’s worth the investment to learn that language. So what we’ve done is we’ve created our own domain-specific language. It’s a search syntax that simplifies a lot of these things. And it’s actually human-readable, with terms like “search”. We offer full-text search, right? And so it’s this search syntax that you can use. And anyone who’s familiar with using a command-line tool will be able to pick it up very quickly. And for the future, we’re working on Jinja templates so that you don’t even really have to know the syntax. You just write in Jinja, and then it automatically creates the Resoto syntax.

Kostas Pardalis 49:33

Yeah. And one last question from me, and then I’ll give the microphone back to Eric. You mentioned that outside of Resoto, there’s also another product called Cloud2SQL, right? Yeah. Yes. Well, how does this work? How do you get from this cloud, I’m sorry, from this graph data model into SQL? How do you do that?

Lars Kamp 50:02

Yeah. So there is an existing world of analytics engineering, right? And we have all the data infrastructure in place already. And there’s nothing that keeps us from working with infrastructure data the same way we work with data from your CRM, from Salesforce, Google Analytics, Marketo. And that’s why we introduced Cloud2SQL. And basically, all of the things that I told you about the graph and the dependencies and all of that, we just flatten the data out, right, and put it into tables and rows, and then you can export it to a destination of your choice, right? And we call it SQL because you can export it to Snowflake and to Postgres, and also S3, right? And that’s what we use that product for. Now, you will lose these relationships, right, from the graph. And they’re useful for use cases like, obviously, cleanup and security. But then, in theory, you can rebuild them by writing your own joins across these tables, and you can put it into a dbt model. And then you expose it to your Metabase dashboard. So that’s an option we wanted to give existing analytics engineers. And that’s why we introduced Cloud2SQL.
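
[Editor’s sketch] The idea of flattening the graph into tables and then rebuilding a relationship with a join can be shown with an in-memory SQLite database. The table names, column names, and sample rows here are assumptions made for the example and are not the schema that Cloud2SQL actually produces.

```python
import sqlite3


def export_to_tables(conn, instances, volumes):
    """Write flattened resources into two plain tables (hypothetical schema)."""
    conn.execute("CREATE TABLE instances (id TEXT PRIMARY KEY, name TEXT, cores INTEGER)")
    conn.execute("CREATE TABLE volumes (id TEXT PRIMARY KEY, size_gb INTEGER, instance_id TEXT)")
    conn.executemany("INSERT INTO instances VALUES (?, ?, ?)", instances)
    conn.executemany("INSERT INTO volumes VALUES (?, ?, ?)", volumes)


def storage_per_instance(conn):
    """Rebuild the instance-to-volume relationship with a join."""
    query = """
        SELECT i.name, COUNT(v.id), COALESCE(SUM(v.size_gb), 0)
        FROM instances AS i
        LEFT JOIN volumes AS v ON v.instance_id = i.id
        GROUP BY i.id, i.name
        ORDER BY i.name
    """
    return conn.execute(query).fetchall()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    export_to_tables(
        conn,
        instances=[("i-1", "web", 4), ("i-2", "db", 8)],
        volumes=[("vol-1", 100, "i-1"), ("vol-2", 50, "i-1")],
    )
    for row in storage_per_instance(conn):
        print(row)
```

The edge from volume to instance survives only as the `instance_id` column, which is why the relationship has to be reconstructed in SQL, for example inside a dbt model, rather than traversed as in the graph.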

Kostas Pardalis 51:16

Eric, it’s all yours again. All right, Lars.

Eric Dodds 51:22

I guess the question is, how can data teams and infrastructure engineers in general, and you know, we have sort of more data engineers, and data teams, you know, as listeners for the podcast? I guess the big question is, the cloud infrastructure ecosystem is expanding at an alarming rate. What should they think about that? And how can they sort of plan for what’s coming in the next several years, especially as it relates to costs? Because it’s going to be a problem? I mean, you know, AWS is a great example. But you know, all the other cloud providers are going to become just as complicated over time.

Lars Kamp 52:09

Yeah. You know, I would approach this from the perspective of what we need to deliver to our customers. I don’t mean to go too far away from the actual infrastructure, but we know that, you know, to stay alive, these companies need to develop and ship a lot of new digital products, right? And so I need to empower developers. They need to be able to spin up infrastructure, and I do not want to put too many blockers around them. So this is the world I want to live in. And I want to give them the freedom to try out new tools and new paths that the cloud provider has given me. Now, how do I stay in control when I do that? This world will never go away. There will always be more innovation, more products, right? And how do I stay in control while I do that? And I think the answer, as we discussed, includes data. It’s to say, look, there’s a time for reactive intervention, with real-time, high-granularity data, right? And there are tons of great tools out there that do that. But there’s also a time just for exploration, for tracking long-term trends, and for using data to take remediation action that steers my infrastructure back onto the path that I want it to be on, without having some sort of incident. Right? And I think that’s the philosophy that we’re proposing. Right? You use data as an input to write code so that your developers don’t have to.

Eric Dodds 53:41

Yeah, I love it. All right. Well, Lars, this has been absolutely fascinating, an area that we haven’t covered a ton on the show, but one that has a direct impact on all sorts of data stuff across the stack. So thank you for educating us and sharing your insights. And best of luck with Resoto.

Lars Kamp 54:00

I appreciate it. Always good seeing you guys. And as a longtime listener of The Data Stack Show myself, I’m actually very excited that I can be a guest now.

Eric Dodds 54:10

Well, it’s a true privilege. And thank you for your support.

Lars Kamp 54:15

Thank you guys.

Eric Dodds 54:17

Kostas, what a fascinating conversation with Lars from Resoto. I learned a huge amount. And I think my big takeaway is that, you know, I kind of went into this conversation expecting to be, you know, astounded by the complexity of resource management across the entire ecosystem of infrastructure and tooling, which I was. It’s a very large and complex problem. But the bigger thing was how similar the issue actually is to a standard data flow in terms of the solution, right? And so Lars was kind of describing it as, you’re ingesting inputs, you’re doing some sort of modeling, and then you’re pushing those back out. Right? And so when we think about the modern data stack, I mean, that’s, you know, bread and butter for a data engineer dealing with customer data, for example. So it really struck me that, you know, there’s an elegant architecture that already exists for solving this pretty complex problem.

Kostas Pardalis 55:20

Yeah, yeah. 100%. I think outside of proving today that resource management is a data problem, I think we also proved that everyone is a data engineer, right? Every software engineer is a data engineer in the end. In a way, many of the problems that we are talking about solving actually contain a big part of data engineering work that has to be done. Data has to be exported, it has to be transformed somehow, modeled, and, of course, exposed to the data consumer for value to be created there. And I mean, okay, it will sound like something that has been said many times already, that this is the decade where everything is going to be data. But I think we are starting to see that a lot. And we are starting to see it by actually getting into domains that don’t necessarily feel like they are, you know, data problems, or data-related technologies that have to be built. But in the end, that’s exactly what is happening, right? And I think, especially with AI and all the stuff that’s happening right now, we are going to see more and more of, let’s say, these domains come back and be rebuilt, and rediscover the data problems that can be defined there, including sales, marketing, pretty much everything. And yeah, it was super fascinating. We should get Lars back again. He’s a good friend. And I think whenever we talk with him, we always come up with very interesting insights.

Eric Dodds 57:12

No, I completely agree. So much to learn, and would love to have Lars back. Such a deep thinker about these problems. Go ahead and subscribe to the show if you haven’t. Look it up on your favorite podcast network, tell a friend, and we will catch you on the next one. We hope you enjoyed this episode of The Data Stack Show. Be sure to subscribe on your favorite podcast app to get notified about new episodes every week. We’d also love your feedback. You can email me, Eric Dodds, at eric@datastackshow.com. That’s E-R-I-C at datastackshow.com. The show is brought to you by RudderStack, the CDP for developers. Learn how to build a CDP on your data warehouse at RudderStack.com.

